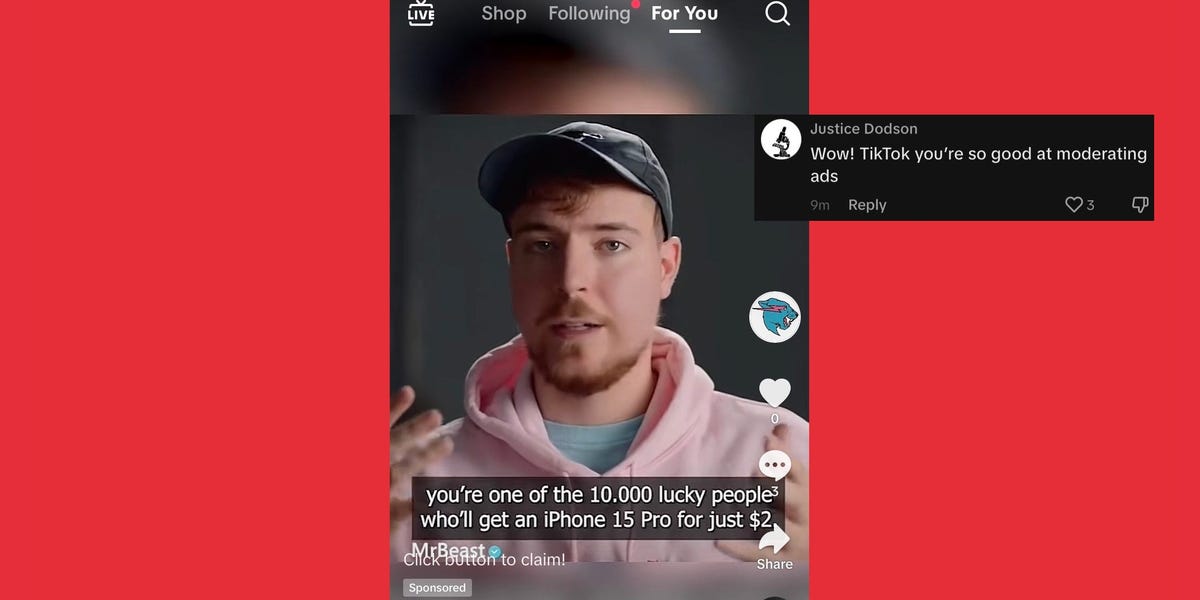

TikTok ran a deepfake ad of an AI MrBeast hawking iPhones for $2 — and it’s the ‘tip of the iceberg’

As AI spreads, it brings new challenges for influencers like MrBeast and platforms like TikTok aiming to police unauthorized advertising.

Everyone with a brain has been saying this would happen for the last decade, and yet there was no legislation put in place to target this behavior

Why does every law need to be reactionary? Why can’t we see a situation developing and get ahead of it by legislating the very obvious things it can be used for?

How about a real answer:

Few of our legislators have any idea how technology or the Internet works. Anything about the Internet that is obvious to the crowd on Lemmy will probably never cross the radar of a geriatric legislator who never even needs to write their own emails bc an aide will do it.

So, the first reason is that the law likely already covers most cases where someone is using deepfakes. Using it to sell a product? Fraud. Using it to scam someone? Fraud. Using it to make the person say something they didn’t? Likely falls into libel.

The second reason is that the current legislation doesn’t even understand how the internet works, is likely amazed by the fact that cell phones exist without the use of magic, and half of them likely have dementia. Good luck getting them to even properly understand the problem, never mind come up with a solution that isn’t terrible.

The problem is that realistically this kind of tort law is hilariously difficult to enforce.

Like, 25 years ago we were pirating like mad, and it was illegal! But enforcing it meant suing individual people for piracy, so it was unenforceable.

Then the DMCA was introduced, which defined how platforms were responsible for policing IP crime. Now every platform heavily automates copyright enforcement.

Because there, it was big moneybags who were being harmed.

But somebody trying to empty out everybody’s Gramma’s chequing account with fraud? Nope, no convenient platform enforcement system for that.

You’re saying that the solution would be to hold TikTok liable in this case for failing to prevent fraud on its platform? In that case, we wouldn’t even really need a new law. Mostly just repealing or adding exceptions to Section 230 would make platforms responsible. That’s not a new solution though. People have been pushing for that for years.

DMCA wasn’t a blanket “you’re responsible now”, but defined a specific process for “this is how you demand something is taken down and the process the provider must follow”.

IANAL, but can’t MrBeast sue the ad creator company for damaging his reputation?

Good luck with that, I guess. This company will be gone before misterB can finish writing his lawsuit, and with it all the scammed money. But I guess there is some law forcing platforms to not promote scams, I hope, at least in some countries.

Huh TIL that the average age of the Senate and the House has steadily increased over time: https://www.nbcnews.com/data-graphics/118th-congress-age-third-oldest-1789-rcna64117

Hope more learn soon and finally vote for some young people, dammit 😁✌🏻

Because no laws will pass without reason.

> yet there was no legislation put in place to target this behavior

Why is the solution to every problem outlawing something?

“We need to do something about prostitution. Let’s outlaw it!”

“We need to do something about alcohol. Let’s outlaw it!”

“We need to do something about drugs. Let’s outlaw them!”

“We need to do something about gambling. Let’s outlaw it!”

All of it… a bunch of miserable failures, which have put good people in prison and turned our whole country into a goddamn police state. You can’t outlaw technology without international treaties to make sure every other country follows suit. That barely works with nuclear weapons, and only because two cities were destroyed by the bombs and we spent at least a couple of decades afraid of a nuclear apocalypse.

What the hell do you think is going to happen if we make moves on AI? China takes the lead, does what it wants, and suddenly, it’s the far superior superpower. The end.

Hell, how do we know this isn’t China propaganda running on China’s propaganda platform?

Why is legislation = outlawing to you?

Fear mongering.

How is making it illegal to steal a person’s face and make them say things they never agreed to going to make China an AI super power?

Not gonna lie, my dude, you have a lukewarm take.

It’s already illegal to impersonate someone to steal money. It’s called fraud.

AI is going to cause huge problems (I am really worried about how things are going to shake out) but I’m also not convinced writing special laws about it is going to change anything. We do need to make sure our current laws don’t have loopholes that AI can somehow exploit.

It’s fraud to use it for financial gain, however it’s not illegal to directly copy someone’s likeness for non business uses.

You can legally make videos of people saying or doing things they never said or did, and as this technology gets more advanced we will see more of its effects. Politics will be very tricky when you can upload a video of Presidents or candidates saying literally anything.

Not only that, but make someone commit a crime on camera? Even if you aren’t trying to get them prosecuted, it could lead to severe issues. You could ruin someone’s career by making them scream slurs at someone in a Starbucks or make videos of them being abusive to their families.

You can argue it’s slander and libel, but where does that fall into AI? What’s the line? What if I make a joke song with someone’s voice? What if I make a joke video that has them doing horrible things? What’s the line?

Slander and libel laws don’t draw clear distinctions when it comes to AI voice and video synthesis.

I also think there should be distinctions here. This isn’t just slapping someone’s face onto an ad with a fake quote, this is creating a video of them saying something they never said using a technology that doesn’t just inch closer, but makes leaps and bounds towards being indistinguishable from reality

> You can argue it’s slander and libel, but where does that fall into AI? What’s the line? What if I make a joke song with someone’s voice? What if I make a joke video that has them doing horrible things? What’s the line?

Trashing someone’s reputation I would imagine, especially if they’re a public figure that relies on their reputation monetarily.

These laws require that there is intent to cause harm to a reputation

How do you deal with cases where these AI cause damage financially but you can’t prove or prosecute on intent?

IANAL, but not asking for permission to use their image/persona shows intent?

In most countries you cannot. Making a fake of someone saying something they didn’t is slander at the minimum.

> It’s fraud to use it for financial gain, however it’s not illegal to directly copy someone’s likeness for non business uses.

So, never mind the fact that almost any use case is going to be for political or financial gain. Let’s just take the Mr Beast example here.

It’s an ad. It’s being used for financial gain, because it’s an ad. Either somebody is actually selling $2 iPhones (doubtful), or it’s a scam. Scams are also illegal, under various kinds of laws. Unfortunately, scams are usually committed in other countries.

This is an ad on a Chinese social media platform. Who’s going to enforce getting rid of this shit on a Chinese social media platform? Yeah, I know you’re going to point out that TikTok US is technically a US company, but we all know who really owns ByteDance and TikTok.

A federal investigation on this matter is going to point to TikTok US and then lead nowhere because the scam was created in China.

> How is making it illegal to steal a person’s face and make them say things they never agreed to going to make China an AI super power?

One, fraud is already illegal, and there’s plenty of other laws to use in this situation. And none of those laws apply to other countries. A country like China doesn’t give a shit, and will gladly use AI to dupe American audiences into whatever they want to manipulate.

Two, as soon as you ask Congress to enact some law to defend against the big bad AI monster under your bed, it’s going to go one of two ways:

- They push some law that’s so toothless that it doesn’t really do anything except limit the consumer and put even more power into the corporations.

- They push a law so restrictive that other countries take advantage of the situation and develop better AI than we have. And yes, a technology this important has the ability to give one country a huge advantage.

It’s an arms race right now. Either we adapt to these situations with enforcement, education, and containment, or other countries will control our behaviors through manipulation and propaganda. More laws and legislation is not going to magically fix the problem.

Copyright infringement was also already illegal, but mass copyright infringement on major platforms didn’t really get handled until the DMCA came out with specific responsibilities for how platforms had to handle copyright infringement.

Like, if you let 15 seconds of the wrong pop-song appear in a YouTube vid they will come after you because YouTube has to avoid being liable for that infringement, but the phone companies can let scammers run rampant without consequence.

At no point did you come anywhere close to anything that can be considered a rational thought

I award you no points, and may God have mercy on your soul.

Or you could propose alternative solutions instead of building a weird straw man with sex workers and gin.

Are you seriously comparing alcohol to people stealing someone’s likeness to commit fraud?

That’s already illegal though

If you can prove it. Which takes time and money. It would be far better to update laws to keep up with our times and put a stop to it before it begins.

What’s being done to Mr Beast, and also Tom Hanks, is nothing short of identity theft.

Simpsons called it:

Oh boy. This is all moving very quickly. People already fall for simple SMS scams, I can only imagine just how many more will be falling victim to this trash in months/years to come.

People have already been falling for scams that “Elon Musk” was promoting. Naturally I’m talking about these crypto schemes run by scammers on YouTube using a deepfake of Musk. It’s been happening for about two years now.

Bill Gates has been giving away his fortune to some lucky email recipients every year now since the days when you had to pay for the internet by the hour.

Don’t even need AI video to do that. People just give away tens of thousands of dollars based on a shitty email, written in bad English, with Bill Gates’ name on it.

Just imagine fans getting a FaceTime call from a Taylor Swift, explaining they won half-price tickets to an exclusive fan event. Then “Taylor” has to drop out to make the other calls, but will leave them a link for the purchase - only valid for 15 minutes, as of course many others are waiting for this opportunity.

This is the entire basis of using an adblocker like uBlock Origin. It is purely defensive. You don’t know what an advertising (malvertising) network will deliver, and neither does the website you’re on (TikTok, Google, Yahoo, eBay, etc etc etc). With generative AI and video ads and the lack of content checking on the advertising network this will just get worse and worse. I mean, why spend money on preventing this? The targeted ads and user data collection are where the money’s at, baby!

Related note, installing uBO on my dad’s PC some 8 years ago was far more effective than any kind of virus scanner or whatever. Allowing commerce on the Internet was a mistake. That’s the root of all this bullshit, anyway.

Fuck ads in general. I don’t care if they are legitimate or not I don’t want to be mentally assaulted every time I try to browse a website.

That too.

> Allowing commerce ~~on the Internet~~ was a mistake. That’s the root of all this bullshit, anyway.

That’s more accurate.

You can’t put everything back in Pandora’s Box. Right or wrong this is the world we live in. What is lacking is regulation. If left to their own devices we (royal) are shitheels. Unfortunately we (royal) are persistent shitheels which is why when we put in regulations we then strive to rip them out.

Do you mean “we (humans)”? Because “the royal we” just means “I”. Like how the queen says “we are not amused” when she means “I don’t like it”. Related to how in many European languages (including early-modern and older English) the plural is a polite form of address (like tu and vous in French, du and Sie in German, thou and you in English).

Honestly ads aren’t that bad when done right. For instance, the yellow pages had ads but they didn’t follow you wherever you went. We need ads that people want to look at. If I’m trying to find something to buy I don’t mind looking at an ad. I just don’t want it to be everywhere and end up being malicious.

> We need ads that people want to look at

Welcome to the pro-data-collection team! We’re wildly unpopular online.

Yellow pages is not targeted advertising. We need static content

Static content is literally the opposite of “ads people want to see”

Although I’d say a good 99% of all ads are terrible, I have yet to find any that are absolutely egregious when visiting sites like FurAffinity. It totally depends on the site and what ad services they chose or are forced into if they have ads. Also on where they are placed.

I never thought of it in security terms but that makes sense. If nothing else, having it installed makes for a better web experience.

Bro I still don’t know who MrBeast is.

Currently largest and most successful YouTuber on the platform (by a wide margin), started out by doing challenge videos about himself (24h in ice, that kinda stuff) that he’d invite friends to as the goody sidekicks causing mischief and making his challenges a little harder/more interesting.

These days, his stuff has transformed into a media powerhouse, all of it is still kinda falling into a challenge category. Now with far higher stakes and involving other people in competitions against each other - think “kids vs adults - group with most people still in the game after 5 days wins $500k” - where several days (sometimes months) of filming all gets cut down to one 10-20 minute long video.

There are also just “look at this thing” videos like “$1 to $10,000,00 car” where he and his friends check out increasingly expensive cars until they eventually get a whole bridge cordoned off to drive in the most expensive car in the world.

He does some philanthropy, like his “plant 10 million trees” campaign and makes money through sponsorship deals and advertising his own brands - they’re currently running their own line of (fair trade?) chocolate bars that are available (in most places?) in the US, which kids will buy because of the brand recognition, leaving them with a ton of profits.

How is he simultaneously so famous and yet no one knows who he is? I feel more people would know who Linus is than him. Until about a year ago I’d never even heard the name.

I’ve never watched a Mr Beast video but it sounds like he makes a lot of content that would mainly appeal to zoomers, which explains his apparent high popularity but low cultural impact.

sampling bias?

Mr Beast and Skibidi toilet are two things that are viewed by millions upon millions, I’ve never watched them and neither have ever been recommended to me by youtube.

Felt like I was taking crazy pills for a while, but now I just consider myself lucky.

I believe he is a Youtuber. That’s as far as I’ve gotten.

If memory serves (being knowledge I gleaned from a podcast), he’s a YouTuber that has carved out a popular niche in philanthropy of sorts. All for views of course, but some philanthropy nonetheless. Very popular I think with, I want to say, Gen Alpha aged kids. A lot of people have imitated the content style in the last few years. So I guess there is instant brand recognition and trust there for a lot of people.

I was confused because I thought they were talking about the LA Beast. Apparently this is not him.

Nobody fucking cares.

fwiw i read that comment and thought, ‘hmm, i don’t either’ and then i went and watched a few of his videos. they’re pretty awesome in a feel-good way. nice to see someone using tons of money to make other people happy and do good things for a change. now i’m subscribed to his channel :)

The more I hear about AI-generated content and other crap that is posted online these days, the more I wonder if I should just start reading books instead, maybe even learn to play a musical instrument and leave the virtual world altogether.

Plagiarized books entirely written by an AI are not far-fetched. Get ready for a shitty reality.

Why would AI need to write a plagiarized book? Isn’t that just a knock off?

Because writing a knockoff book takes time and effort, and getting an AI to do it does not.

The same question applies to many fields. Why get AI to write a plagiarized tweet or Reddit comment? It’s a lot less effort than a book, yet both platforms are plagued with bot comments.

There are already books written entirely by AI too. AI is literally getting everywhere.

I’m sure it’s also writing the music too.

And some forum comments.

Beep boop

> And some forum comments.

Good thing concert tickets aren’t overpriced and only available via resale, so I can see humans perform human music.

Keep informed so you don’t buy AI-written books. Better know what’s going on instead of letting the world happen to you imo.

Butlerian jihad sounds like a good idea rn

Yeah but then I need to hire a mentat to keep my shit straight

No.

We need the AIs to make the Men of Gold so we can compete with the murder orgy space elves.

Came to watch a fake mrbeast left dissatisfied. Came back to post a link: https://twitter.com/MrBeast/status/1709046466629554577

Click blewlowaugh now

And that is why we need a pixel poisoner but for videos.

I’m not familiar with the term, and Google shows nothing that makes sense in context. Can you explain the concept?

Here specifically it’s a technique to alter images that makes them distorted for the “perception” by generative neural networks and unusable as training data but still recognizable to a human.

The general term is https://en.wikipedia.org/wiki/Adversarial_machine_learning#Data_poisoning

One example of a tool that does this is https://glaze.cs.uchicago.edu/ but I have doubts about its imperceptibility

Yeah I’m at a loss as well. Is it a way to prove the source of a video?

It’s AI poison. You alter the data in such a way that the image looks unchanged to the human eye, but when image-generating AI software uses the image in its training samples, the alterations ruin its ability to make correlations and recognize patterns.

It’s toxic for the entire data set too, so it can damage the AI output of most things as long as it’s within the list of images used to train the AI.
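For anyone who wants to see the basic idea in code, here is a toy Python sketch. To be clear, this is not how Glaze or any real poisoning tool works: the linear `score` "model", the `perturb` helper, and the random "image" are all made up for illustration. It only shows that nudging every pixel by a tiny bounded amount can move a model's output a lot while no single pixel changes by more than `epsilon`.

```python
import random


def score(weights, pixels):
    """Toy linear 'model': just a weighted sum of pixel values."""
    return sum(w * p for w, p in zip(weights, pixels))


def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon in the direction that lowers the score."""
    shifted = []
    for w, p in zip(weights, pixels):
        if w > 0:
            step = -epsilon
        elif w < 0:
            step = epsilon
        else:
            step = 0.0
        # Keep pixel values inside the valid [0, 1] range.
        shifted.append(min(1.0, max(0.0, p + step)))
    return shifted


random.seed(0)
n = 10_000                                    # hypothetical 100x100 grayscale image
weights = [random.uniform(-1, 1) for _ in range(n)]
pixels = [random.random() for _ in range(n)]  # pixel values in [0, 1]
epsilon = 0.02                                # max change per pixel (2% of the range)

poisoned = perturb(weights, pixels, epsilon)
max_change = max(abs(a - b) for a, b in zip(pixels, poisoned))

print("largest per-pixel change:", max_change)
print("score before:", score(weights, pixels))
print("score after: ", score(weights, poisoned))
```

Real tools target deep networks rather than a single weighted sum, so treat this as an intuition pump only.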

That seems about as effective as those No-AI pictures artists like to pretend will poison AI data sets. A few pixels isn’t going to fool AI, and anything more than that is going to look like a real image was AI-generated, ironically.

It can seem like whatever you want it to, it’s already been used and has poisoned data sets.

Wake me up when orgs like Stability AI or Open AI bitch about this technology. As it stands now, it’s not even worth mentioning, and people are freely generating whatever pictures, models, deepfakes, etc. that they want.

It’s a bit unclear what you’re after here. Don’t do it unless it’s already perfect?

Why would they openly bitch about it? That’s free advertising that it works. Not to mention, you can’t poison food someone already ate. They already have full sets of scrubbed data they can revert to if they add a batch that’s been poisoned. They just need to be cautious about newly added data.

It’s not worth mentioning if you don’t understand the tech, sure. But for people who make content that is publicly viewable, this is pretty important.

It’s sort of like the captcha things. A human brain can recognize photos of crosswalks or bikes or whatever but it’s really hard to train a bot to do that. This is similar but in video format.

This is the best summary I could come up with:

TikTok ran an advertisement featuring an AI-generated deepfake version of MrBeast claiming to give out iPhone 15s for $2 as part of a 10,000 phone giveaway.

The sponsored video, which Insider viewed on the app on Monday, looked official as it included MrBeast’s logo and a blue check mark next to his name.

Two days ago, Tom Hanks posted a warning to fans about a promotional video hawking a dental plan that featured an unapproved AI version of himself.

“Realism, efficiency, and accessibility or democratization means that now this is essentially in the hands of everyday people,” Henry Ajder, an academic researcher and expert in generative AI and deepfakes, told Insider.

Not all AI-generated ad content featuring celebrities is inherently bad, as a recent campaign coordinated between Lionel Messi and Lay’s demonstrates.

“If someone releases an AI-generated advert without disclosure, even if it’s perfectly benign, I still think that should be labeled and should be positioned to an audience in the way that they can understand,” Ajder said.

The original article contains 518 words, the summary contains 168 words. Saved 68%. I’m a bot and I’m open source!

I read this title last night and thought it was a story about AI making an amalgamation of MrBeast and Stephen Hawking to shill iPhones

I mean, that’s why I clicked

Can Mr Beast sue TikTok over this?

No, he can only file DMCA takedowns.

If he can find the creator or publisher, he can sue for misappropriation of name and likeness. It’s a privacy tort.

However I think he already said he doesn’t care

Section 230 will absolutely reign here.

No, Section 230 protects TikTok as a platform. He would have to sue the ad creator.

It would be a good time to start a paid online community that has a lengthy vetting process for accuracy and authenticity of all content.

Haha, paid, good one!

It’s either paid by the users or by the advertisers, or do you have a better plan on how to fund running such platform?

Nope, I don’t, otherwise I’d do it because it would be a massive change to what’s out there.

I was a bit too short on my comment I admit. I am laughing about the suggestion because making a completely new community with paid access from the start will doom it immediately. It needs to have a certain pull to get users to actually pay something and so far I’d say that’s either failed or platforms have not quite taken off.

Same as converting a free community to a paid access model, for that you just need to look at what happens to Twitter. It’s a slow bleed but it certainly damaged the platform. Instagram is next it seems with the ad-free tiering that will no doubt lead to obnoxious ads. We will see if they achieved the necessary pull to keep people there or not.

Yes, because SomethingAwful has struggled over the decades so much.

Ok, they have like 200,000 registered users. I know it’s historically an important site, but in terms of userbase that is nothing.

And do they have a lengthy vetting process for accuracy and authenticity in place as you are asking for?

Who said this platform would be the biggest?

You’re making a lot of assumptions here, and it’s coming off as seal-lioning so I’m going to disengage from you here. Cheers.

Well, the thing is that only big platforms struggle with the problems of the topic we discuss here. Smaller platforms just do not have the userbase size where it would be viable to try and scam them for money. Which is why this happens on tiktok in the example up here.

So I just don’t agree with your viewpoint and you don’t with mine. I take no offense to that and I hope you did not either, that was definitely not my intention.

Yes, paid

Guess who wouldn’t be in the target demographic?

Moderation is costly if you’re dedicated to only putting out accurate and truthful content. And people will pay for that accuracy when the media landscape becomes saturated with AI deep fakes.

Link to video?

Paywalled. :(